Case Study

For many small marketing teams, AI starts as a useful but informal writing assistant. Someone opens ChatGPT, types in a rough prompt, copies the output, edits it manually, and eventually moves the content into a blog, email, ad platform, or CMS.

That workflow can be helpful, but it is rarely dependable.

The quality of the output depends heavily on the person writing the prompt, how much context they remember to include, and how familiar they are with the company’s voice, positioning, audience, and content goals. One person may get a strong draft from the tool, while another may get something generic, off-brand, or unusable. Over time, that inconsistency creates friction for the team.

In this case, the client had already gathered a meaningful amount of company knowledge. They had examples of existing content, guidelines for how the brand should sound, notes on positioning, competitive research, and references from competitor websites. The problem was not a lack of information. The problem was that all of that information lived in different places, and it was not consistently shaping the content being generated.

The opportunity was to turn that scattered knowledge into a practical system: a simple content engine that a marketing team could use without needing to become experts in prompting, AI tools, or technical workflows.

The central challenge was not simply to connect a team to an LLM. Anyone can open a chatbot and ask it to write a blog post. The harder problem was building a workflow that made the model more useful, more consistent, and more aligned with the company’s brand.

For a less AI-native marketing team, the blank prompt box creates too much responsibility. It asks the user to know what context to provide, how to phrase the request, how to structure the output, and how to guide the model toward the right tone. Even if the team has brand guidelines, competitive analysis, and strong examples, those materials do not automatically make their way into the prompt.

That meant the tool needed to do more of the thinking in the background.

The goal was to create an interface that felt simple on the surface, while quietly packaging the right context, prompts, and reference material behind the scenes. The user should not need to think about prompt engineering. They should only need to answer a much simpler question: What would you like to create?

The strategy was to design the system around the marketing team’s actual content needs, not around the technical possibilities of AI.

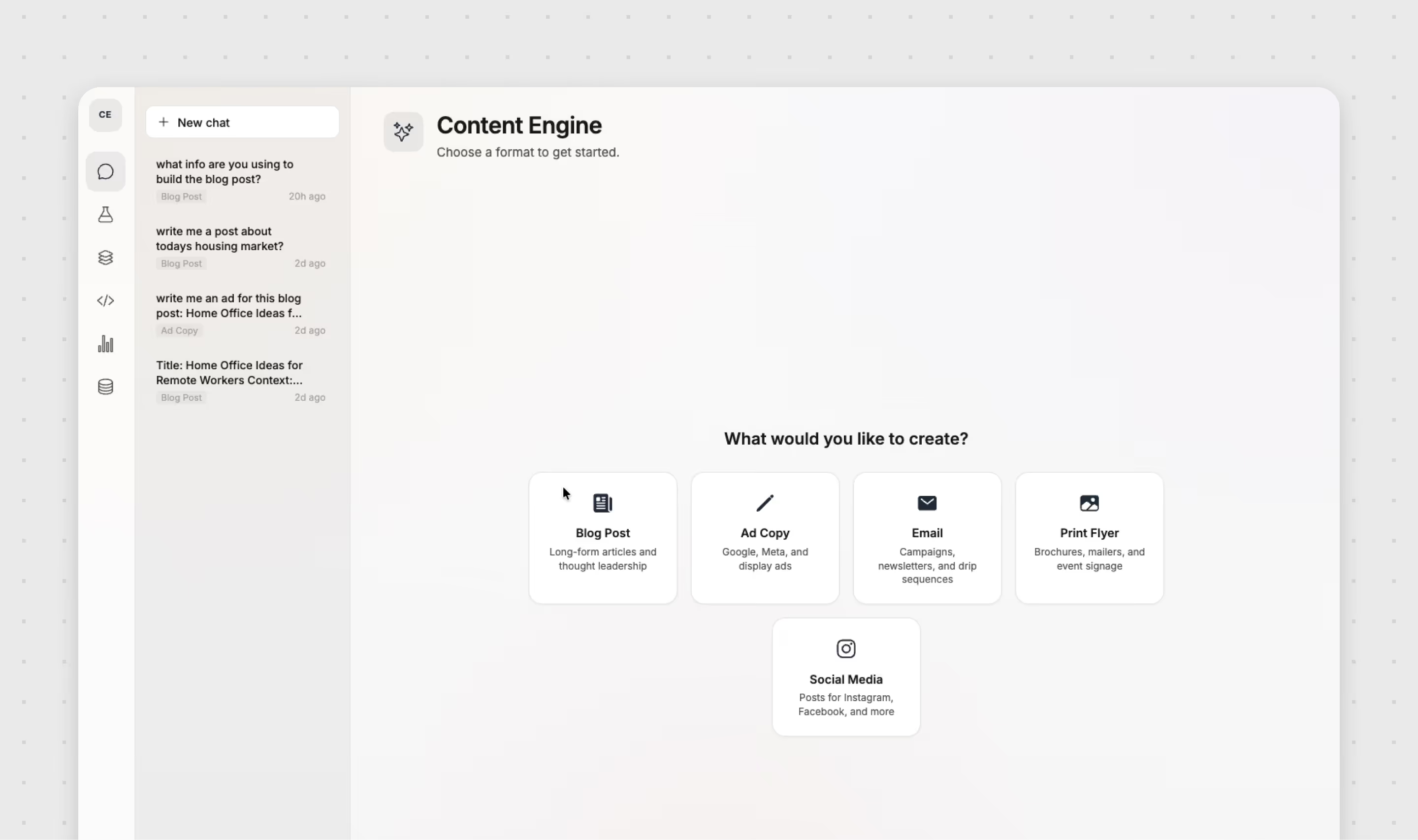

Rather than giving users an open-ended chat interface, the content engine was organized around a small set of common content types. Each one represented a category of work the team regularly needed to produce:

- Blog posts for long-form articles and thought leadership
- Ad copy for Google, Meta, and display campaigns
- Email for campaigns, newsletters, and drip sequences
- Print flyers for brochures, mailers, and event signage
- Social media posts for Instagram, Facebook, and other platforms

This structure gave the tool a clear starting point. Instead of asking the user to write a detailed prompt from scratch, the interface asks them to choose the type of content they need. From there, the user enters a topic or direction, such as a regional housing construction trend, a campaign announcement, or a product-specific message.

Behind that simple input is a more structured process. Each content type is mapped to a different prompt framework, with its own assumptions about format, tone, audience, and output structure. A blog post needs to be handled differently from a display ad. A print flyer has different constraints than a nurture email. By separating these use cases at the interface level, the system can create more appropriate content without requiring the user to know how to ask for it.
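
As a rough illustration of that mapping, the sketch below keys a small prompt framework to each content type. The field names, the framework wording, and the `build_instructions` helper are all hypothetical; the actual prompts used in the project are not shown in this case study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptFramework:
    """Assumptions for one content type. All values here are illustrative."""
    format_notes: str
    tone: str
    audience: str
    output_structure: str


# Hypothetical frameworks, one per content type offered in the interface.
FRAMEWORKS = {
    "blog_post": PromptFramework(
        "Long-form article", "Informed and conversational",
        "Prospects researching the topic", "Title, intro, subheaded sections, conclusion"),
    "ad_copy": PromptFramework(
        "Short ad variations", "Direct and benefit-led",
        "Paid-media audiences", "Headline, description, call to action"),
    "email": PromptFramework(
        "Single campaign email", "Warm and personal",
        "Existing subscribers", "Subject line, preview text, body, call to action"),
    "print_flyer": PromptFramework(
        "One-page print piece", "Clear and punchy",
        "Event or mail recipients", "Headline, three key points, contact details"),
    "social_post": PromptFramework(
        "Short social caption", "Friendly and concise",
        "Followers on social platforms", "Caption, hashtags"),
}


def build_instructions(content_type: str, topic: str) -> str:
    """Combine the user's topic with the framework for the chosen content type."""
    try:
        fw = FRAMEWORKS[content_type]
    except KeyError:
        raise ValueError(f"Unknown content type: {content_type!r}") from None
    return "\n".join([
        f"Format: {fw.format_notes}",
        f"Tone: {fw.tone}",
        f"Audience: {fw.audience}",
        f"Output structure: {fw.output_structure}",
        f"Topic: {topic}",
    ])
```

The user only supplies the content type and the topic; everything else comes from the stored framework, which is the point of separating use cases at the interface level.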

The front end of the content engine was intentionally minimal. The goal was not to impress the user with the complexity of the system. It was to remove friction.

When a user opens the tool, they are presented with a simple screen asking what they would like to create. From there, they select one of the content categories, enter the topic or instruction, and submit the request. The interaction is familiar, direct, and easy to repeat.

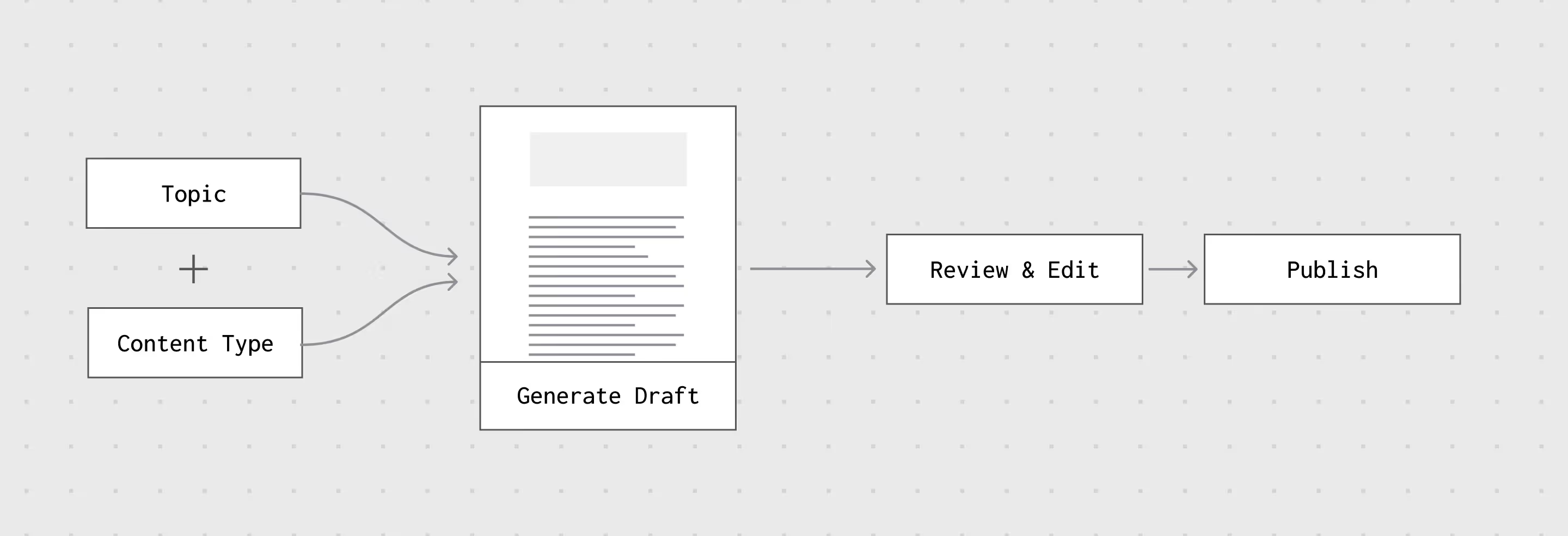

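A typical flow looks like this: the user chooses a content type, enters a topic or instruction, submits the request, and receives a draft.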

That simplicity matters because the audience for the tool is not necessarily made up of AI power users. They do not need a complex interface with dozens of settings, advanced model controls, or technical configuration options. They need a dependable way to get a useful first draft that already understands the company.

The interface acts as a layer between the marketing team and the model. It reduces the number of decisions the user needs to make while increasing the amount of relevant context being sent into the generation process.

Most of the value of the project sits behind the interface.

The content engine was designed to work with a collection of company knowledge, including brand voice guidance, previous content examples, positioning notes, and competitive references. This material gives the model a more complete understanding of how the company communicates and how its content should be framed.

Instead of requiring the user to paste brand guidelines into every request, the system assembles the relevant context automatically. The user’s short prompt is combined with pre-written instructions, content-specific prompt structures, and selected knowledge base references. This allows the model to respond with more awareness of the company’s tone, history, and market position.

The simplified logic looks like this:

Content Type Prompt + Brand Voice Guidance + Existing Content Examples + Competitive Context → Structured LLM Request
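
One way to picture that assembly step is the minimal sketch below. It assumes a chat-style message format with `system` and `user` roles; the function name, the section labels, and the choice to place company context in a single system message are illustrative assumptions, not a description of the project's actual implementation.

```python
def assemble_request(content_type_prompt, topic, brand_voice, examples, competitive_context):
    """Package the user's short topic with pre-written company context.

    Returns a chat-style request body. The section labels are hypothetical.
    """
    system_sections = [
        "## Content type instructions\n" + content_type_prompt,
        "## Brand voice guidance\n" + brand_voice,
        "## Existing content examples\n" + "\n\n".join(examples),
        "## Competitive context\n" + competitive_context,
    ]
    return {
        "messages": [
            # Everything the user would otherwise have to remember to paste in.
            {"role": "system", "content": "\n\n".join(system_sections)},
            # The only part the user actually types.
            {"role": "user", "content": topic},
        ]
    }
```

The user message stays as short as the topic field in the interface, while the system message carries the rules, examples, and positioning the team would otherwise have to recall on every request.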

This approach shifts the work away from the user and into the system. The marketing team no longer has to remember every rule, preference, and positioning detail each time they need content. Those decisions are built into the workflow.

Because company knowledge changes over time, the content engine needed to be maintainable. It could not be a static tool that worked only at the moment it launched.

The system was built as a front end to Open WebUI, allowing for an admin layer where prompts, knowledge bases, and reference materials can be tuned over time. This gives the client a way to update the system as the company evolves, new campaigns are created, or messaging changes.
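
For readers curious how a custom front end might talk to Open WebUI, here is a hedged sketch that only builds the HTTP request without sending it. It assumes Open WebUI's OpenAI-compatible chat endpoint at `/api/chat/completions` and its `files` field for attaching a knowledge collection; both should be checked against the API documentation for the deployed version. The base URL, API key, model name, and collection ID are placeholders.

```python
import json
from urllib.request import Request


def build_openwebui_request(base_url, api_key, model, messages, knowledge_collection_id=None):
    """Build (but do not send) a chat completion request for an Open WebUI instance.

    Assumption: the instance exposes POST /api/chat/completions and accepts a
    "files" entry of type "collection" to attach a knowledge base.
    """
    payload = {"model": model, "messages": messages}
    if knowledge_collection_id:
        # Attach an admin-managed knowledge collection to the request.
        payload["files"] = [{"type": "collection", "id": knowledge_collection_id}]
    return Request(
        url=base_url.rstrip("/") + "/api/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Because the prompts and knowledge collections live on the Open WebUI side, an admin can change them without the front end's request code needing to change.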

That admin layer makes the tool more flexible. Brand guidelines can be refined. New examples can be added. Competitive references can be changed. Prompt structures can be adjusted as the team learns what kinds of outputs are most useful.

This was an important part of the strategy. The content engine was not meant to be a one-off writing tool. It was designed as a repeatable marketing system that could improve as the company’s needs became clearer.

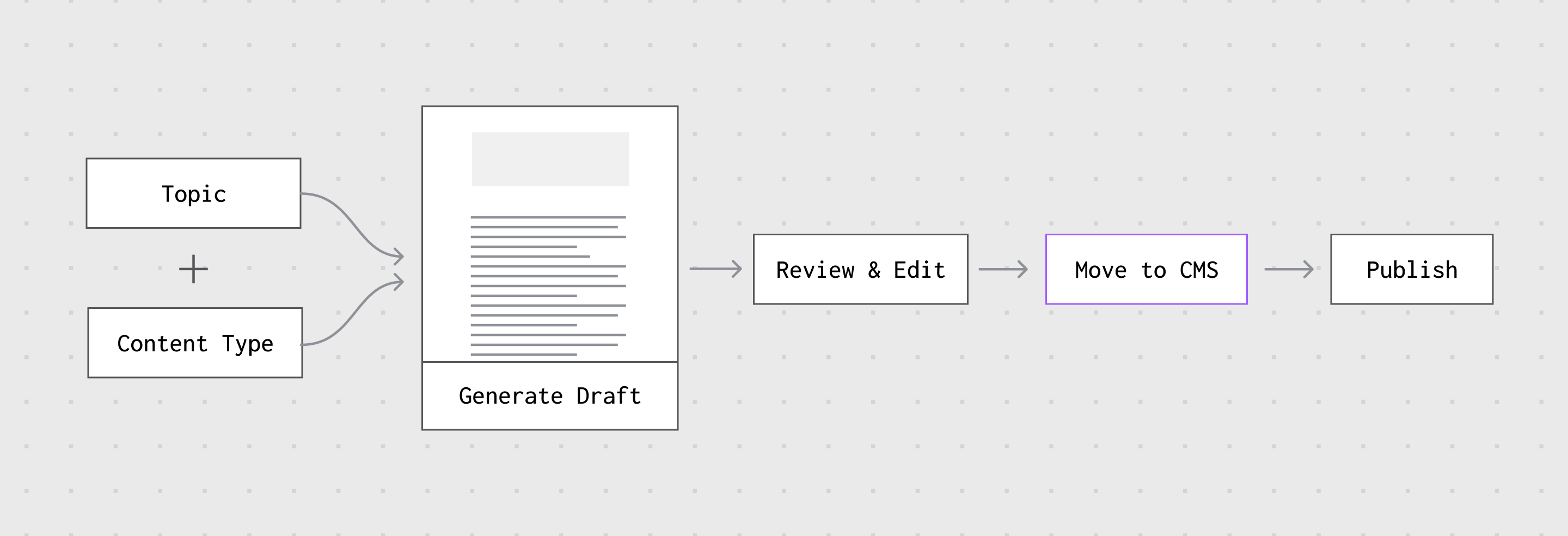

Once the system generates a draft, the content is returned in a structured format, typically Markdown. This makes the output easier to display in the content engine UI, easier to review, and easier to move into the next step of the workflow.
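
To show why a structured format helps, the sketch below splits a Markdown draft into a title and a list of sections that a UI could render or a reviewer could scan. It is a simplified illustration under stated assumptions: the function name and return shape are hypothetical, and it treats any line starting with `#` as a heading, so it would misread `#` lines inside fenced code blocks.

```python
import re

# Matches ATX-style Markdown headings such as "# Title" or "## Section".
HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def _add_section(sections, heading, body_lines):
    """Append a section unless it has neither a heading nor any text."""
    text = "\n".join(body_lines).strip()
    if heading is not None or text:
        sections.append({"heading": heading, "body": text})


def split_draft(draft: str) -> dict:
    """Split a Markdown draft into a title and its sections."""
    title = None
    sections = []
    heading, body_lines = None, []
    for line in draft.splitlines():
        match = HEADING.match(line)
        if match and len(match.group(1)) == 1 and title is None:
            # The first level-one heading is treated as the draft title.
            title = match.group(2).strip()
        elif match:
            # Any other heading closes the current section and starts a new one.
            _add_section(sections, heading, body_lines)
            heading, body_lines = match.group(2).strip(), []
        else:
            body_lines.append(line)
    _add_section(sections, heading, body_lines)
    return {"title": title, "sections": sections}
```

With the draft broken into parts like this, each section can be displayed, reviewed, or edited on its own before the content moves to the next step.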

The goal was not to eliminate editing. Strong marketing content still needs review, judgment, and refinement. Instead, the goal was to make the first draft more useful and more aligned from the beginning.

From there, the team can review the draft, make edits, and eventually move the content into WordPress or another publishing destination. This keeps the human review process intact while reducing the amount of time spent getting from a blank page to a workable draft.

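In practice, the workflow becomes: choose a content type, enter a topic, generate a draft, review and edit it, then move it into WordPress or another publishing destination.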

That may sound simple, but it changes the role of AI in the team’s process. Rather than being a loose, unpredictable writing assistant, it becomes a more structured part of the content production workflow.

This project was developed in collaboration with Nick Lavella through System Fabric, a partnership focused on creating custom AI and workflow tools for specific client needs.

The collaboration brought together two complementary skill sets: technical development and marketing strategy. Nick’s development expertise helped shape the application architecture and implementation, while BrandZap focused on the content strategy, brand logic, prompt structure, and practical usability for the marketing team.

That combination was important because the challenge was not purely technical. The success of the tool depended on understanding how marketers actually work, where AI creates friction, and how brand knowledge needs to be translated into usable content systems.

The final solution was a custom AI content engine that turns scattered company knowledge into a simple, repeatable content creation workflow.

Instead of asking the team to become better prompt writers, the system gives them a more guided experience. They select what they want to create, enter a topic, and receive a draft that already reflects the company’s brand voice, content patterns, and market context.

The result is a tool that helps the team produce content across multiple formats while reducing the inconsistency that often comes from one-off AI usage.

Key components included:

- A simple content creation interface
- Content-type-specific prompt frameworks
- A company knowledge base built from brand, content, and competitive materials
- Admin controls for updating prompts and knowledge sources
- Markdown-based outputs for review and editing
- A workflow designed to support eventual publishing in WordPress

The biggest change was not that the team gained access to AI. They already had that.

The real change was that AI became easier to use consistently.

Before, each user had to bring the context themselves. They had to know what to ask, how to ask it, and what brand rules to remember. After the content engine, much of that context was built into the system. The user could focus on the content need, while the tool handled the structure around it.

That made the experience feel less like starting from scratch and more like working inside a branded content system.

For a small marketing team, that kind of structure can make AI feel less experimental and more dependable. It creates a practical bridge between the power of an LLM and the day-to-day needs of a team that simply wants to create better content faster.

AI tools are powerful, but power alone does not make them useful.

For many teams, the real opportunity is not in giving everyone access to more advanced AI features. It is in designing simpler workflows that make the technology easier to use, easier to trust, and easier to align with the company’s existing knowledge.

This project sits directly in that gap. It takes unstructured company information (brand guidelines, past content, competitive research, and positioning notes) and turns it into a practical content engine for everyday marketing work. The result is a system that helps a less technical team get more dependable value from AI without forcing them to become AI experts.